Why claims AI has to be built around evidence

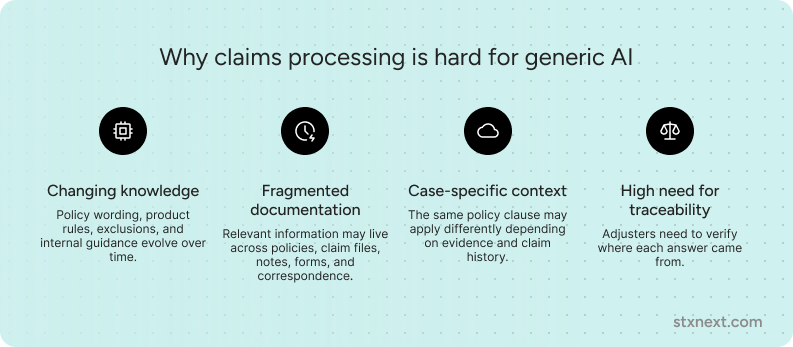

Claims processing is where AI confidence meets operational reality. An answer that sounds right has little value if an adjuster cannot verify which policy clause, document, or case detail supports it.

Policy wording changes, product rules evolve, exceptions accumulate, and historical claim records may conflict with current documentation. That makes claims a strong test of AI architecture: teams need answers that are fast, consistent, defensible, and grounded in current evidence.

Fine-tuning helps with stable, repeatable tasks such as document classification, routing, triage, and anomaly detection. Advanced RAG becomes essential when the system needs to answer questions using live documents, preserve context, and expose the evidence behind each response.

That was one of the main conclusions our AI team at STX Next reached while designing and testing a RAG system for insurance scenarios. Claims AI works best when repeatable work is handled by specialized models, while changing knowledge is supported by retrieval, verification, and source visibility.

Traditional RAG: The surface-level approach most products use

Most insurance products take a remarkably superficial approach to Retrieval-Augmented Generation. In my experience, these tools often rely on a single, rigid RAG method: they build an entire system around one basic technique rather than adapting to complex data. This lack of flexibility creates a significant hurdle in the complex world of insurance claims.

Key limitations of the "shallow" approach:

- Reliance on a single framework. Instead of evaluating the specific nature of a dataset, these systems force every query through one predefined pipeline.

- The context gap. When a tool fails to manage metadata effectively, it loses the thread of the information. This makes it nearly impossible to trace an answer back to a specific page or paragraph.

- Vulnerability to input errors. Standard systems often stumble over typos, shorthand, or queries stripped of context. They lack advanced paraphrasing or query expansion techniques, such as Multi-query or HyDE, which interpret what a human actually means.

- Absence of conflict verification. Traditional setups rarely include a mechanism such as Consensus RAG to check whether different source documents provide contradictory information.

The core issue with these standard systems is the way they handle (or fail to handle) context. Many products scatter context because they neglect metadata, and without robust metadata storage a system struggles to pin down precise information. The frustratingly common outcome is a generic answer: a user asks a specific question about a policy or a claim, and the system returns a broad, surface-level response that lacks the detail needed for professional decision-making.

Instead of this simplified model, a production-grade system for insurance claims processing must function as "multiple systems in one." True reliability requires deep file cleaning, hierarchical data structures like RAPTOR, and strict metadata management to ensure every decision remains transparent and auditable.

What a production-ready claims RAG system needs to do

Based on the work our AI team at STX Next carried out while building and testing an insurance-focused RAG system, the stronger approach is not a single retrieval pattern, but an architecture that can adapt to the data and the question being asked.

In claims processing, that flexibility matters. A query may require deep file cleaning, key-based search, metadata-aware retrieval, or more complex tree structures like RAPTOR when information is spread across long, interdependent documents. Simple semantic search is rarely enough when the system has to work across policy wording, claim files, internal guidance, forms, notes, and historical cases.

A production-ready RAG system for insurance claims therefore needs several layers to handle real-world messiness:

- Conflict detection. Through techniques such as Consensus RAG, the system can identify when source documents provide contradictory answers. This matters in claims analysis because policy documents, historical records, and internal guidance may overlap or disagree.

- Query intelligence. To handle typos, vague prompts, shorthand, or missing context, techniques such as Multi-query and HyDE can help expand a single question into several useful search angles.

- Transparency. Claims teams need to know where an answer came from. Every response should link back to a specific data chunk, with enough context to identify the relevant file, page, chapter, or section.
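To make the query intelligence layer more concrete, here is a minimal sketch of the Multi-query idea: expand one question into several variants, retrieve for each, and merge the rankings with reciprocal rank fusion. Everything in it is an assumption for illustration. The `SHORTHAND` glossary, the toy `CHUNKS` corpus, and the token-overlap `retrieve` function are invented stand-ins; a real system would more likely ask an LLM to paraphrase the question and use vector search.

```python
from collections import defaultdict

# Hypothetical shorthand glossary. A real system would more likely ask an LLM
# to paraphrase the question (Multi-query) or draft a hypothetical answer (HyDE).
SHORTHAND = {"excl": "exclusion", "pol": "policy", "ded": "deductible"}

# Toy corpus standing in for indexed policy chunks (invented for illustration).
CHUNKS = {
    "c1": "policy exclusion for injuries sustained during extreme sports",
    "c2": "deductible amounts for outpatient treatment abroad",
    "c3": "how to submit a claim form and supporting documents",
}


def expand_query(query: str) -> list[str]:
    """Return the original query plus a variant with shorthand spelled out."""
    original = query.lower()
    expanded = " ".join(SHORTHAND.get(tok, tok) for tok in original.split())
    variants = [original]
    if expanded != original:
        variants.append(expanded)
    return variants


def retrieve(query: str, k: int = 2) -> list[str]:
    """Rank chunks by naive token overlap (a stand-in for vector search)."""
    q_tokens = set(query.lower().split())
    scored = [(len(q_tokens & set(text.split())), cid) for cid, text in CHUNKS.items()]
    ranked = sorted((s for s in scored if s[0] > 0), key=lambda s: (-s[0], s[1]))
    return [cid for _, cid in ranked[:k]]


def multi_query_retrieve(query: str, k: int = 2) -> list[str]:
    """Retrieve per variant, then merge rankings with reciprocal rank fusion."""
    fused = defaultdict(float)
    for variant in expand_query(query):
        for rank, cid in enumerate(retrieve(variant, k), start=1):
            fused[cid] += 1.0 / (60 + rank)  # 60 is the commonly used RRF constant
    return sorted(fused, key=lambda cid: (-fused[cid], cid))


print(retrieve("pol excl"))              # raw shorthand matches nothing
print(multi_query_retrieve("pol excl"))  # the expanded variant finds the exclusion chunk
```

The point of the sketch is the shape of the pipeline rather than the expansion method: each variant gets its own retrieval pass, and fusion rewards chunks that rank well across several phrasings.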

In some cases, a hybrid approach such as RAFT can also help the system handle industry-specific jargon while still relying on retrieval for current, source-based answers. For sensitive claims data, the deployment model also matters: many insurers need the system to run on-premise or in another controlled environment where data access and auditability can be managed carefully.

By combining these layers, RAG becomes more than a search interface. It becomes a decision-support layer that can help claims teams work with complex, changing documentation while keeping the evidence visible.

Two design decisions that matter especially in insurance RAG

In our work on insurance-focused RAG, two elements turned out to be especially important: granular auditability and linguistic precision. These are the features that make the system easier to verify, control, and use in professional claims workflows.

1. Detailed source citation: beyond the document level

Many RAG systems struggle with context scattering. They may tell a user that an answer comes from a 200-page policy manual, but not where in that manual the relevant evidence appears. In claims processing, that is often not enough.

A more useful system should provide evidence at a more granular level. Instead of only naming a file, it should help the user locate the exact page, chapter, paragraph, clause, or data chunk that supports the answer.

This matters because adjusters need to justify decisions. When an answer is linked to verifiable source material, the user can check the reasoning, identify missing context, and reduce the risk of relying on a plausible but unsupported response.
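One way to picture granular citation is to store location metadata on every chunk at indexing time and carry it through to the response. The sketch below is an illustration under assumptions, not a description of a specific product: the `Chunk` fields, the file name, and the clause numbering are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Chunk:
    """A retrievable piece of text plus the metadata needed to locate it."""
    text: str
    file: str
    page: int
    section: str
    clause: Optional[str] = None


def format_citation(chunk: Chunk) -> str:
    """Build a human-readable pointer to the exact place in the source."""
    parts = [chunk.file, f"p. {chunk.page}", f"section {chunk.section}"]
    if chunk.clause:
        parts.append(f"clause {chunk.clause}")
    return ", ".join(parts)


def answer_with_evidence(answer: str, chunks: list[Chunk]) -> dict:
    """Attach a citation and excerpt for every chunk that supports the answer."""
    return {
        "answer": answer,
        "evidence": [
            {"citation": format_citation(c), "excerpt": c.text} for c in chunks
        ],
    }


# Invented example data: the file name and clause wording are placeholders.
chunk = Chunk(
    text="Injuries sustained while riding a motorcycle without a licence are excluded.",
    file="travel_policy.pdf",
    page=47,
    section="4.2",
    clause="4.2(b)",
)
response = answer_with_evidence("The claim falls under an exclusion.", [chunk])
print(response["evidence"][0]["citation"])
```

Because the metadata travels with the chunk, the adjuster sees the page and clause alongside the answer instead of only the name of a 200-page manual.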

2. Language centralization: preserving specialized jargon

Standard multilingual RAG setups can be too general for high-stakes industries. In insurance, law, or medicine, a word used in everyday conversation may have a specific professional meaning. If the system handles terminology inconsistently across languages or relies too heavily on broad semantic similarity, important nuance can be lost.

Language centralization helps reduce that risk. Instead of allowing the system to drift between languages and general meanings, the pipeline keeps language handling consistent from database construction to final prompting.

Because embedding-based retrieval depends on semantic similarity, inconsistent language handling can pull specialized terms toward broader, everyday meanings. Keeping the system within one linguistic framework helps industry-specific terms stay closer to their professional context.

This makes the system better at preserving the meaning of company-specific and industry-specific documentation, especially in workflows where a small linguistic difference can change how a policy condition is interpreted.
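As a rough illustration of language centralization, the sketch below fixes one pipeline language in a single config object and uses it at both ends: when deciding which documents can be indexed as-is, and when building the final prompt. The names `PipelineConfig`, `Document`, `split_by_language`, and `build_prompt` are hypothetical, and the language codes are placeholders. How off-language documents are translated or reviewed is deliberately left out.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PipelineConfig:
    language: str  # the single language used for indexing, querying, and prompting


@dataclass(frozen=True)
class Document:
    doc_id: str
    language: str
    text: str


def split_by_language(docs: list[Document], config: PipelineConfig):
    """Separate documents that match the pipeline language from those that
    need translation or review before they are allowed into the index."""
    accepted = [d for d in docs if d.language == config.language]
    held_back = [d for d in docs if d.language != config.language]
    return accepted, held_back


def build_prompt(question: str, evidence: list[str], config: PipelineConfig) -> str:
    """Build the final prompt in the same language the index was built in."""
    joined = "\n".join(f"- {e}" for e in evidence)
    return (
        f"Answer in language '{config.language}' using only the evidence below.\n"
        f"Evidence:\n{joined}\n"
        f"Question: {question}"
    )


config = PipelineConfig(language="en")
docs = [
    Document("policy-1", "en", "The deductible applies per claim."),
    Document("memo-7", "de", "Der Selbstbehalt gilt pro Schadenfall."),
]
accepted, held_back = split_by_language(docs, config)
prompt = build_prompt("Does the deductible apply per claim?", [accepted[0].text], config)
```

The design choice worth noting is that the language is declared once and read everywhere, so indexing and prompting cannot silently drift apart.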

Claims processing as the ultimate RAG use case

Insurance claims processing is a textbook example of where advanced AI must excel. Unlike more static domains, claims environments are chaotic: rules shift, policies update, and data lives in a sprawling data lake where ten different policies might have ten different sets of rules.

Navigating the "data mismatch" problem

The biggest challenge in a claims environment is inconsistency. It is common to come across scenarios where two documents provide conflicting answers. Our system addresses this with a dedicated testing layer.

The architecture identifies when data points "drift" apart. If a policy document and a historical claim record disagree, the system flags it immediately rather than guessing.

Take a complex case, like a broken leg in Thailand under specific conditions. By combining historical claim data with precise policy nomenclature, the system can parse these "strange scenarios" and surface the clauses in the fine print that actually apply.
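The flag-rather-than-guess behavior described above can be sketched as a simple agreement check. This is not the implementation of Consensus RAG, which this article does not spell out; it is a minimal illustration that assumes each retrieved source has already been reduced to a `Finding` (a hypothetical structure) and that values can be compared after light normalization. The sources and amounts are invented.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    source: str  # where the value was found
    field: str   # what the value describes
    value: str   # the value as extracted from the source


def normalize(value: str) -> str:
    """Lowercase, drop thousands separators, and collapse whitespace."""
    return " ".join(value.lower().replace(",", "").split())


def detect_conflicts(findings: list[Finding]) -> dict[str, dict[str, list[str]]]:
    """Return {field: {value: [sources]}} for fields where sources disagree."""
    by_field = defaultdict(lambda: defaultdict(list))
    for f in findings:
        by_field[f.field][normalize(f.value)].append(f.source)
    return {
        field: dict(values)
        for field, values in by_field.items()
        if len(values) > 1  # more than one distinct value means a conflict
    }


# Invented example: a current policy document and an older claim record disagree
# on the medical limit but agree on the deductible.
findings = [
    Finding("policy_2024.pdf", "medical_limit", "EUR 50,000"),
    Finding("claim_record_2019", "medical_limit", "EUR 30,000"),
    Finding("policy_2024.pdf", "deductible", "EUR 100"),
    Finding("claim_record_2019", "deductible", "eur 100"),
]
conflicts = detect_conflicts(findings)
print(conflicts)
```

Returning the disagreeing sources, instead of silently choosing one value, is what lets the adjuster decide which document takes precedence.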

Why fine-tuning often isn't enough

Many suggest fine-tuning a model on insurance data, but fine-tuning cannot keep pace with how quickly the underlying documents change. You cannot retrain a model every week just because a new policy launches. In claims, accuracy depends on the Retrieval-Augmented Generation framework because it pulls from live, updated documents.

However, I do use fine-tuning for a specialized "router." Instead of burning expensive tokens on a large LLM to read every single scrap of paper, a smaller, fine-tuned model (such as a BERT-based classifier) categorizes incoming documents. This creates a highly efficient pipeline in which the large model is reserved for the most complex reasoning tasks.
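The routing idea can be sketched as follows. To stay self-contained, the example uses a keyword-based `keyword_classifier` as a stand-in for the fine-tuned model; in practice that function would wrap the small classifier. The labels, the confidence values, the `SIMPLE_LABELS` set, and the threshold are all assumptions for illustration.

```python
from typing import Callable

# Labels that the cheap path is allowed to handle (hypothetical choice).
SIMPLE_LABELS = {"invoice", "claim_form"}


def keyword_classifier(text: str) -> tuple[str, float]:
    """Stand-in for a small fine-tuned classifier: returns (label, confidence)."""
    lowered = text.lower()
    rules = {
        "invoice": "invoice",
        "claim form": "claim_form",
        "medical report": "medical_report",
    }
    for keyword, label in rules.items():
        if keyword in lowered:
            return label, 0.95
    return "unknown", 0.30


def route(
    text: str,
    classify: Callable[[str], tuple[str, float]] = keyword_classifier,
    threshold: float = 0.8,
) -> str:
    """Send confident, simple documents down the cheap path; escalate the rest."""
    label, confidence = classify(text)
    if confidence >= threshold and label in SIMPLE_LABELS:
        return f"fast_path:{label}"
    return "llm_review"


print(route("Invoice no. 123 for hospital stay"))
print(route("Medical report: fracture of the left tibia"))
print(route("Handwritten note from the policyholder"))
```

Passing the classifier in as a parameter keeps the routing rule separate from the model, so the stand-in can be replaced by a real fine-tuned classifier without touching the escalation logic.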

Sovereignty and the audit trail

In claims processing, sensitive data should never leave the company infrastructure. Handling sensitive information (PHI and PII) calls for an on-premise approach where every decision is auditable. If a claim is denied or flagged, I ensure there is a clear decision path back to the source. This transparency forces a level of rigor that protects both the insurer and the policyholder, turning a "black box" process into a clear, verifiable workflow.

RAG vs. fine-tuning: Choosing the winning strategy

Choosing between RAG and fine-tuning depends on the business problem, how fast data changes, and the need for transparency. In professional, "production-ready" environments, I rarely view these as mutually exclusive. Instead, they function as two different gears in the same machine.

When RAG takes the lead

A retrieval-based system is a system of knowledge and evidence. It wins whenever accuracy and auditability are non-negotiable.

- RAG is the clear winner when policies and rules change weekly. Fine-tuning cannot keep up with this pace; constant retraining is both slow and prohibitively expensive.

- Fine-tuning is inherently opaque. A model cannot cite a specific source for a fact it "learned" during training. RAG wins in regulated sectors because every answer can be linked to a specific file, page, or chapter. This builds the trust necessary for professional use.

- Through mechanisms like Consensus RAG, the system identifies contradictions across different documents. This is a critical advantage in insurance claims processing, where documents often overlap or conflict.

When fine-tuning wins

Fine-tuning is a system of skills and classification. It excels at operational tasks where speed and pattern recognition matter most.

- Fine-tuning wins at the entry point of a data lake. Using a smaller, specialized model (like BERT) to categorize documents is much cheaper and faster than using a massive LLM for every simple task.

- This method is ideal for analyzing historical patterns to identify potential irregularities or scams.

- For repetitive actions – such as estimating standard payouts for minor, frequent events – fine-tuning on anonymized data is perfectly sufficient.

RAFT: The "golden mean"

In high-stakes industries like insurance or medicine, I often implement a hybrid approach called RAFT (Retrieval-Augmented Fine-Tuning). This allows the model to "study" specialized industry jargon (fine-tuning) while maintaining the ability to cite the most recent documents (RAG).

When combined with an on-premise architecture, this keeps sensitive data (PHI, PII) inside the company infrastructure. The result is a system that understands professional nomenclature, remains auditable, and keeps data under the insurer's control.
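For readers who want a feel for what RAFT training data can look like, here is a minimal sketch. It follows the general recipe as we understand it: each training example pairs a question with a mix of the relevant ("oracle") passage and irrelevant ("distractor") passages, and some examples omit the oracle so the model does not learn to assume the answer is always in the context. The function name, the field names, the default proportions, and the sample texts are assumptions for illustration, not a prescribed configuration.

```python
import random
from typing import Optional


def build_raft_example(
    question: str,
    oracle: str,
    distractors: list[str],
    answer: str,
    num_distractors: int = 2,
    keep_oracle_prob: float = 0.8,
    rng: Optional[random.Random] = None,
) -> dict:
    """Build one fine-tuning record with distractors and, usually, the oracle."""
    rng = rng or random.Random()
    # Sample distractor passages without modifying the caller's list.
    context = rng.sample(distractors, k=min(num_distractors, len(distractors)))
    # Occasionally leave the oracle out so the model sees contexts without it.
    has_oracle = rng.random() < keep_oracle_prob
    if has_oracle:
        context.append(oracle)
    rng.shuffle(context)
    return {
        "question": question,
        "context": context,
        "answer": answer,
        "has_oracle": has_oracle,
    }


# Invented passages used only to show the record shape.
example = build_raft_example(
    question="Is physiotherapy abroad covered?",
    oracle="Physiotherapy abroad is covered for up to ten sessions per claim.",
    distractors=[
        "Baggage delays over 12 hours are compensated.",
        "Claims must be submitted within 30 days.",
        "Dental treatment is limited to emergency care.",
    ],
    answer="Yes, up to ten sessions per claim.",
    rng=random.Random(0),
)
print(len(example["context"]), example["has_oracle"])
```

At inference time the fine-tuned model is still given freshly retrieved passages, which is what keeps the answers tied to current documents.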

How to approach RAG in insurance projects

RAG in insurance should not be treated as a plug-and-play layer added on top of documents. In most real environments, retrieval quality depends less on the model itself and more on how well the system reflects the structure, inconsistency, and operational context of the underlying data.

That is why insurance RAG projects should begin with the document landscape, not the interface. Different types of content require different handling. Some datasets need rigorous document cleaning and normalization. Others benefit from key-based indexing, metadata-aware retrieval, or hierarchical approaches such as RAPTOR, especially when information is spread across long, interdependent documents.

This is also where using a mature RAG framework or accelerator can help. Starting from proven components can shorten the path to a working system, reduce avoidable implementation work, and give teams a more reliable foundation for testing with real data. But the value does not come from using a ready-made layer alone. It comes from adapting that foundation to the organization’s policies, claims files, internal guidance, security requirements, and review workflows.

For insurance teams, a robust RAG approach should usually include:

- Consensus RAG. A verification layer that helps identify when different source documents provide conflicting information.

- Intelligent query expansion. Techniques that paraphrase or expand user prompts so the system can handle vague questions, shorthand, typos, or missing context.

- Metadata integration. Strong links between answers and source material, so each response can be traced back to a specific document, section, page, paragraph, or clause.

- Workflow integration. A way to make retrieved evidence useful inside the claims process, whether through a custom chat interface, internal tools, or integration with existing claims management systems.

The goal is not to add AI on top of insurance documentation for its own sake. The goal is to make current, traceable knowledge easier to use in the moments where claims teams need to compare evidence, interpret policy wording, and make decisions that may later need to be reviewed.

Conclusion

In claims processing, the strongest AI architectures usually combine fine-tuning and advanced RAG rather than treating them as competing options.

Fine-tuning is useful for stable, repeatable tasks such as document classification, triage, routing, and pattern detection. Advanced RAG is better suited to questions that depend on current policy documents, claim-specific context, source-level evidence, and auditability.

That distinction matters because claims workflows are built on changing documentation, fragmented evidence, and decisions that need to be defensible. A useful claims AI system should retrieve from live sources, preserve metadata, flag conflicting information where possible, and show users which documents support each answer.

For insurers exploring AI in claims, the most practical starting point is to map the workflow before choosing the model: which tasks are repeatable, which decisions depend on changing knowledge, where evidence needs to be reviewed, and where human oversight is essential.

If you are evaluating how RAG could support your claims process, we can help you assess your document landscape, identify the right retrieval strategy, and decide where AI can create value without weakening control, traceability, or compliance.