What a digital twin in manufacturing actually means

Digital twins have become a staple in conversations about modern production, but in manufacturing, the term often gets blurred. I’ve seen it used to describe everything from machine dashboards to 3D layouts, which makes it harder to pin down what a digital twin in manufacturing actually is.

At its core, a digital twin connects a physical production process with a digital model that can simulate behavior, predict outcomes, and support operational decisions. Instead of just tracking what’s happening on the shop floor, it helps teams understand how changes like speed, temperature, or material inputs will affect performance.

Crucially, this doesn’t require modeling an entire factory upfront. In manufacturing, value comes less from visibility and more from the ability to test, predict, and improve how production actually runs.

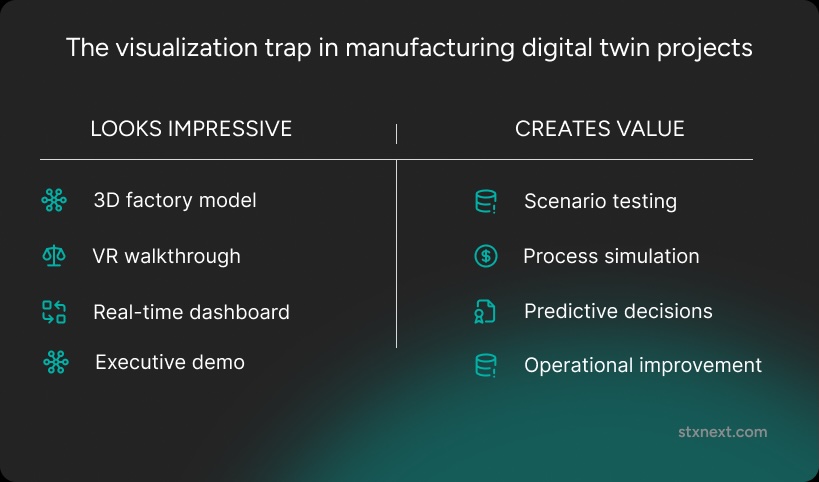

The visualization trap: How "Digital Twin" got misunderstood

There is a recurring phenomenon in the industry where labels are detached from reality. It is much like a company claiming to work in "Scrum" because employees are forced to explain their daily tasks, even if the actual process is nowhere near the methodology. In manufacturing, a similar naming convenience has hijacked the concept of the digital twin.

Often, what is presented as a twin is merely a "digital model" or a "digital shadow." The former is just a static geometric representation – useful for checking if a technician can reach a bolt in an assembly, but functionally dead. The latter is a dashboard that reflects real-time data, showing what is happening now without any predictive power.

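To understand where the confusion comes from, it helps to separate three concepts that are often used interchangeably:

- A digital model. A static representation with no live connection to the physical process.

- A digital shadow. A view that receives real-time data from the process and shows what is happening now, but cannot predict anything.

- A digital twin. A behavioral model that exchanges data with the process in both directions and can simulate and predict outcomes.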

This distinction matters because many manufacturing projects labeled as digital twins stop at the first or second level. They improve visibility, but they do not yet simulate how the process will behave or support decisions about what to change next. That is where the visualization trap begins.

The allure of pretty pictures

The "visualization trap" persists because it is easy to sell. In engineering circles, this is often called "colors for directors." Flashy 3D renderings and VR walkthroughs are impressive in boardrooms, but they often lack the underlying functional logic. If an initiative is driven from the top down, the pressure to deliver something visually "wowing" often outweighs the need for a system that can actually simulate production.

To move beyond a simple monitor, a true digital twin must meet a rigorous standard. The concept is usually credited to Michael Grieves and NASA’s John Vickers, and a widely cited definition describes a digital twin as:

"A set of virtual information constructs that mimics the structure, context, and behavior of a natural, engineered, or social system… is dynamically updated with data from its physical twin, has a predictive capability, and informs decisions that realize value."

Consequently, a legitimate implementation must incorporate:

- A process model. Not just how it looks, but how it behaves.

- Bidirectional data. Information flowing both ways between the physical and virtual worlds.

- Simulation capability. The power to run "what-if" scenarios.

The cost of choosing visualization over functionality is high. Investing in tooling that only makes processes easier to see – rather than easier to improve – results in fragile implementations. One blunt gut-check remains: if a system cannot answer "what happens if X is changed?", it is not a twin, but a monitor with a high price tag.
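To make the three requirements concrete, here is a minimal sketch of a twin for a single cooling step. Everything in it is an assumption made for illustration: the `CoolingLineTwin` class, its parameters, and the use of Newton's law of cooling as the process model. A real process would need a model validated against plant data.

```python
import math
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass
class CoolingLineTwin:
    """Toy twin of one conveyor cooling step (names and numbers are illustrative)."""
    inlet_temp_c: float = 200.0        # last measured part temperature entering the line
    ambient_temp_c: float = 25.0       # last measured ambient temperature
    line_length_m: float = 12.0        # assumed length of the cooling section
    cooling_rate_per_s: float = 0.05   # assumed constant k in Newton's law of cooling

    def update_from_sensors(self, inlet_temp_c: float, ambient_temp_c: float) -> None:
        """Physical -> virtual: refresh the twin's state with live readings."""
        self.inlet_temp_c = inlet_temp_c
        self.ambient_temp_c = ambient_temp_c

    def predict_outlet_temp(self, speed_m_per_s: float) -> float:
        """Process model: cooling over the time a part spends on the line."""
        residence_s = self.line_length_m / speed_m_per_s
        excess = self.inlet_temp_c - self.ambient_temp_c
        return self.ambient_temp_c + excess * math.exp(-self.cooling_rate_per_s * residence_s)

    def recommend_speed(self, candidates: Iterable[float],
                        max_outlet_temp_c: float) -> Optional[float]:
        """Virtual -> physical: fastest candidate speed that still meets the limit."""
        feasible = [s for s in candidates
                    if self.predict_outlet_temp(s) <= max_outlet_temp_c]
        return max(feasible) if feasible else None


twin = CoolingLineTwin()
speeds = [0.2, 0.4, 0.6]
print(twin.recommend_speed(speeds, max_outlet_temp_c=70.0))   # 0.4

# Hotter parts arrive; the same question now has a different answer.
twin.update_from_sensors(inlet_temp_c=240.0, ambient_temp_c=25.0)
print(twin.recommend_speed(speeds, max_outlet_temp_c=70.0))   # 0.2
```

There is no 3D rendering anywhere in this sketch, yet it can answer "what happens if the line speed is changed?" and its answer shifts when the live data does. That is the part a dashboard cannot do.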

What a digital twin should actually do

I believe that digital twins in manufacturing should have three core capabilities:

- Scenario testing. A digital twin should let teams run “what if” analyses on the process. What happens if production speed increases? Will machines wear out faster or require more frequent cleaning? What if temperatures are pushed to their limits – and how long can the system operate under those conditions? Instead of guessing, teams must be able to see how the process would respond.

- Process simulation. A digital twin should model how the system behaves over time, combining physical models with data-driven predictions. This includes showing what will happen not only if something changes, but also if everything stays the same. That’s what makes it a natural extension of predictive maintenance. It helps anticipate future parameters and understand how different variables (like humidity, material quality, or machine settings) affect the end result.

- Decision support. This is where the real value comes together and turns simulations into actionable decisions. For example, combining separate models – like temperature and defect prediction – can help optimize for a specific outcome, such as minimizing energy consumption while keeping defects low.
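As a rough sketch of the decision-support idea, the snippet below combines two separate models to pick a temperature setpoint. Both models (`energy_kwh_per_batch` and `defect_rate`) and all coefficients are hypothetical stand-ins; in practice they would be fitted to process data or derived from physics.

```python
from typing import Iterable, Optional


def energy_kwh_per_batch(temp_c: float) -> float:
    """Hypothetical energy model: consumption grows linearly with the setpoint."""
    return 40.0 + 0.5 * (temp_c - 150.0)


def defect_rate(temp_c: float) -> float:
    """Hypothetical defect model: defects rise as the setpoint moves away from 180 C."""
    return 0.01 + 0.00004 * (temp_c - 180.0) ** 2


def best_setpoint(candidates: Iterable[float], max_defect_rate: float) -> Optional[float]:
    """Lowest-energy setpoint whose predicted defect rate stays within the limit."""
    feasible = [t for t in candidates if defect_rate(t) <= max_defect_rate]
    if not feasible:
        return None  # no setpoint satisfies the quality constraint
    return min(feasible, key=energy_kwh_per_batch)


setpoints = range(150, 211, 5)
choice = best_setpoint(setpoints, max_defect_rate=0.02)
print(choice, energy_kwh_per_batch(choice))   # 165 47.5
```

A plain grid search is used here because it is easy to audit. With more variables, a proper optimizer would likely be a better fit, but the structure stays the same: separate models, one objective, explicit constraints.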

Note: None of this requires building a full digital replica of the entire factory from day one. In fact, that approach is often too complex, expensive, and risky. A more effective strategy is to build smaller, modular components, where each delivers value on its own.

When is a digital twin in manufacturing a bad idea?

In my experience, not all digital twin use cases in manufacturing are good use cases. Going for this solution can be a bad idea when the goal isn’t clear or a simpler solution can solve the problem. Not every challenge requires a complex, real-time system.

Often, predictive maintenance models or standalone simulations are enough to forecast failures or test scenarios using historical data and process parameters. A digital twin only adds value when a real-time feedback loop matters, i.e., when the current state of machines or changing conditions directly affect outcomes. For example, in processes sensitive to factors like temperature or humidity, combining live data with simulation helps guide decisions.

If those factors don’t influence production, the added complexity doesn’t pay off.

Digital twin use cases in manufacturing

In manufacturing, value isn't found in aesthetic models but in tools that solve specific operational headaches. Digital twin use cases in manufacturing should prioritize functional ROI over visual flair.

Predictive maintenance & safety

Instead of just alerting to potential failures, a twin models the downstream impact of a breakdown. A practical application involves monitoring internal temperatures in industrial furnaces. Rather than forcing technicians into heat-protective suits to manually measure 1200°C environments – a dangerous and imprecise task – a hybrid physics-based and data-driven model provides real-time estimates. This reduces manual checks from daily to once every few days, drastically increasing safety and operational uptime.
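A hybrid estimator of this kind could look roughly like the sketch below. It is a simplification under stated assumptions: the physics part is steady-state conduction through the furnace wall, the data-driven part is just a bias fitted to occasional manual measurements, and the wall thickness, conductivity, and sensor values are made up for illustration.

```python
from statistics import mean
from typing import Iterable, Tuple


def physics_estimate_c(shell_temp_c: float, heat_flux_w_m2: float,
                       wall_thickness_m: float = 0.3,
                       conductivity_w_mk: float = 1.5) -> float:
    """Steady-state conduction through the wall: T_inner = T_shell + q * L / k."""
    return shell_temp_c + heat_flux_w_m2 * wall_thickness_m / conductivity_w_mk


def fit_bias_c(manual_checks: Iterable[Tuple[float, float, float]]) -> float:
    """Data-driven correction: mean gap between manual readings and the physics model.

    Each check is (shell_temp_c, heat_flux_w_m2, measured_inner_temp_c).
    """
    return mean(measured - physics_estimate_c(shell, flux)
                for shell, flux, measured in manual_checks)


def hybrid_estimate_c(shell_temp_c: float, heat_flux_w_m2: float, bias_c: float) -> float:
    """Physics estimate plus the learned correction."""
    return physics_estimate_c(shell_temp_c, heat_flux_w_m2) + bias_c


# Two past manual measurements calibrate the correction term.
bias = fit_bias_c([(200.0, 5000.0, 1212.0), (210.0, 4900.0, 1198.0)])

# Live readings from external sensors give an internal estimate without a manual check.
print(round(hybrid_estimate_c(205.0, 4950.0, bias), 1))   # 1205.0
```

The occasional manual measurement does not disappear in this setup. It becomes the calibration data that keeps the correction term honest, which is why checks can become less frequent rather than stop entirely.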

Implementing this level of foresight requires a robust foundation in predictive analytics in manufacturing, which transforms raw sensor data into the actionable insights needed to anticipate these failures before they occur.

Production line optimization

Before adjusting a physical line, a twin allows for testing scheduling changes or bottleneck fixes in a virtual space. This "what-if" capability ensures that new equipment configurations deliver the expected throughput without risking a single hour of actual downtime.
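A bottleneck "what-if" can start very small. The sketch below assumes a serial line whose throughput equals the rate of its slowest station, and it ignores buffers, downtime, and variability. Station names and rates are invented for the example.

```python
from typing import Dict, Tuple


def line_throughput(stations: Dict[str, float]) -> Tuple[str, float]:
    """Return (bottleneck station, line throughput) for a serial line.

    Simplifying assumption: the line runs at the rate of its slowest station.
    """
    bottleneck = min(stations, key=stations.get)
    return bottleneck, stations[bottleneck]


def what_if(stations: Dict[str, float], changes: Dict[str, float]) -> Tuple[str, float]:
    """Evaluate a scenario without touching the baseline configuration."""
    scenario = {**stations, **changes}
    return line_throughput(scenario)


baseline = {"cutting": 120.0, "welding": 90.0, "painting": 100.0, "packing": 110.0}

print(line_throughput(baseline))                 # ('welding', 90.0)
print(what_if(baseline, {"welding": 130.0}))     # ('painting', 100.0)
```

In this toy scenario, a welding upgrade worth 40 units per hour on paper adds only 10 to the line, because painting becomes the new bottleneck. Surfacing that before the purchase order is exactly the point of testing in a virtual space.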

Quality control scenario modeling

Simulating how process variations affect final output allows for optimization without the cost of physical experiments. By adjusting variables in the twin, engineers identify the precise tolerances needed to maintain quality, reducing scrap rates and material waste.
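One simple way to explore this is a Monte Carlo run: sample part dimensions under different levels of process variation and count how many fall outside the tolerance band. The sketch assumes normally distributed variation around the target, and the target, tolerance, and sigma values are illustrative only.

```python
import random


def simulated_scrap_rate(sigma_mm: float, tolerance_mm: float = 0.05,
                         target_mm: float = 10.0, n_parts: int = 10_000,
                         seed: int = 42) -> float:
    """Estimate the share of parts outside target +/- tolerance.

    Assumption: the dimension is normally distributed around the target,
    with standard deviation sigma_mm representing process variation.
    """
    rng = random.Random(seed)  # fixed seed so scenarios are comparable and repeatable
    out_of_spec = sum(
        1 for _ in range(n_parts)
        if abs(rng.gauss(target_mm, sigma_mm) - target_mm) > tolerance_mm
    )
    return out_of_spec / n_parts


# Compare how scrap responds to tighter or looser process control.
for sigma in (0.02, 0.03, 0.04):
    print(f"sigma={sigma:.2f} mm -> scrap ~ {simulated_scrap_rate(sigma):.1%}")
```

Each scenario costs milliseconds instead of a batch of scrapped material. The estimates are only as good as the assumed distribution, so it is worth checking that assumption against measured parts before acting on the numbers.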

Resource efficiency & training

Modeling energy consumption under different operational loads helps shift high-power tasks to off-peak times. Furthermore, the twin serves as a safe environment for operator training, allowing teams to test edge cases and emergency procedures without any risk to the physical assets or personnel.
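The load-shifting part can be sketched with a few lines of arithmetic. The two-rate tariff, the peak window, and the load figures below are assumptions chosen for illustration; a real model would use the plant's actual tariff and measured consumption profiles.

```python
def tariff_eur_per_kwh(hour: int) -> float:
    """Hypothetical two-rate tariff: peak from 08:00 to 20:00, off-peak otherwise."""
    return 0.30 if 8 <= hour % 24 < 20 else 0.12


def run_cost_eur(start_hour: int, duration_h: int, load_kw: float) -> float:
    """Energy cost of a constant load running for whole hours from start_hour."""
    return sum(load_kw * tariff_eur_per_kwh(start_hour + h) for h in range(duration_h))


def cheapest_start(duration_h: int, load_kw: float) -> int:
    """Earliest start hour (0-23) with the lowest cost for the given task."""
    return min(range(24), key=lambda s: run_cost_eur(s, duration_h, load_kw))


# A 500 kW task that runs for 4 hours.
print(round(run_cost_eur(10, 4, 500.0), 2))   # 600.0 when run mid-morning
best = cheapest_start(4, 500.0)
print(best, round(run_cost_eur(best, 4, 500.0), 2))   # 0 240.0
```

The interesting work in practice is on the constraint side: which tasks can actually move, and what shifting them does to downstream steps. That is where a process model earns its place over a spreadsheet.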

You don't need to twin the entire factory

In my experience, one of the biggest blockers in manufacturing is the assumption that a digital twin means replicating the entire plant. In practice, that approach is a fast way to overcomplicate the project, increase risk, and lose control over costs.

Instead, manufacturing companies should start with a single component and build a model around it using only the data that actually matters. This makes it possible to validate the business case quickly and see real impact early on.

That’s what I’ve seen work best in practice. From there, the system can expand step by step.

There’s also a clear maturity curve. Most companies start with monitoring: simply collecting and structuring data to understand what’s happening. Then comes simulation, where that data is used to test scenarios and predict outcomes. Optimization comes much later, when systems begin to recommend or automate decisions. In reality, most companies operate somewhere between monitoring and early simulation, not at full autonomy.

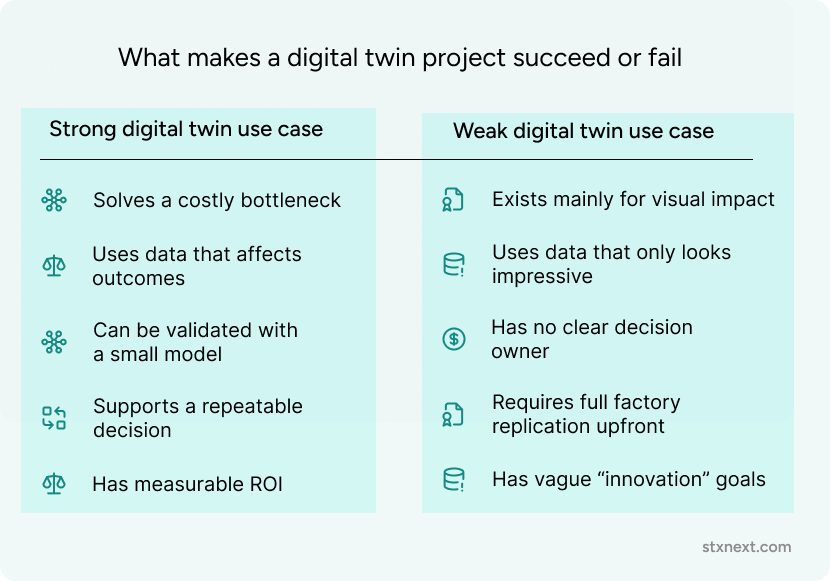

What makes a digital twin project succeed or fail

The difference between a high-ROI implementation and a stalled project often comes down to the motive. Many companies start with the desire for something "impressive" to show off, falling back into the "colors for directors" trap. Real success, however, is found in solving a specific headache, even if the solution looks underwhelming on a screen.

The "anti-value" of pure aesthetics

A project fails the moment it prioritizes the visualization layer over the data model. When an implementation is technically sound but yields no outcome, it is usually because it was built "to be cool" rather than to simplify a process. A successful digital twin in manufacturing should aim to eliminate or reduce the frequency of complicated, dangerous, or time-consuming manual operations – like the furnace measurements mentioned earlier. If the twin only provides a "pretty" version of what everyone already knows, it has failed.

The minimum viable twin

Before building, a team must audit three pillars:

- Problem identification. Does this solve a bottleneck or a safety risk?

- Data integrity. Are the sensors and historical logs available and clean?

- Modeling feasibility. Is the process understood well enough to be mathematically simulated?

Not everything that seems possible can actually be modeled. Starting from scratch requires a "one asset, one process" mindset. If a team cannot validate a small, effective model using basic numerical values, a complex 3D environment will only mask the underlying lack of utility.
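The data integrity pillar in particular can be checked with very little code before any modeling starts. The sketch below is one possible first pass, assuming a sensor log given as timestamps in seconds and values where `None` marks a missing reading. The function name and the chosen metrics are illustrative, not a standard.

```python
from typing import Dict, List, Optional


def audit_sensor_log(timestamps_s: List[float], values: List[Optional[float]],
                     expected_interval_s: float) -> Dict[str, float]:
    """Basic integrity metrics for one sensor's history.

    Assumes timestamps are sorted and values[i] belongs to timestamps_s[i].
    """
    missing = sum(1 for v in values if v is None)
    gaps = [later - earlier for earlier, later in zip(timestamps_s, timestamps_s[1:])]
    return {
        "missing_ratio": missing / len(values) if values else 0.0,
        "largest_gap_s": max(gaps) if gaps else 0.0,
        "gaps_over_expected": sum(1 for g in gaps if g > expected_interval_s),
    }


# One reading per minute is expected; one value is missing and one gap is 3 minutes.
report = audit_sensor_log(
    timestamps_s=[0, 60, 120, 300, 360],
    values=[850.0, None, 852.5, 851.0, 849.5],
    expected_interval_s=60,
)
print(report)   # {'missing_ratio': 0.2, 'largest_gap_s': 180, 'gaps_over_expected': 1}
```

If numbers like these already look bad for the one asset in question, that is useful to know before anyone builds a model on top of them.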

How to choose the starting point

Deciding which process to twin first should be a pragmatic choice based on efficiency, not optics. The goal is to find a task that is currently problematic or resource-heavy. By building small "demos" and validating them with actual data, teams can prove the concept before scaling. Success is marked by a team knowing exactly how to act on the data provided. If the twin identifies a variance, and the team has a protocol to fix it, the project is delivering value. Without that loop, the twin is just a digital ornament.

Focus on the problem instead of the picture

The true value of a digital twin in manufacturing lies in its ability to enable safer, smarter, and faster experimentation. It is a tool for rapid hypothesis testing, not a cosmetic upgrade for existing processes. Before committing to months of development, a month of disciplined analysis can save a project from failure. This preparation phase is critical to determine if the available data is sufficient or too "leaky" to support a functional model.

Success starts by answering one fundamental question: Which specific problem will this twin solve? Whether the goal is to minimize service intervals in predictive maintenance, reduce production scrap, or optimize energy consumption, the objective must be defined first. Start small, validate the data, and prioritize effectiveness over visual flair.

Not every manufacturing problem needs a digital twin. If you’re evaluating whether the investment makes sense, we can help you assess your data, process complexity, and operational goals to identify a practical first use case, or recommend a simpler alternative when a full twin would add unnecessary complexity. Contact us and we'll be happy to help.